Voice by default: Using AI to navigate the safety net

Propel recently brought the entire team together in San Diego, where we spent a day in person with 40 local SNAP beneficiaries. We set up a series of hands-on, in-person research booths testing ideas across the product, and compensated participants for their time and travel.

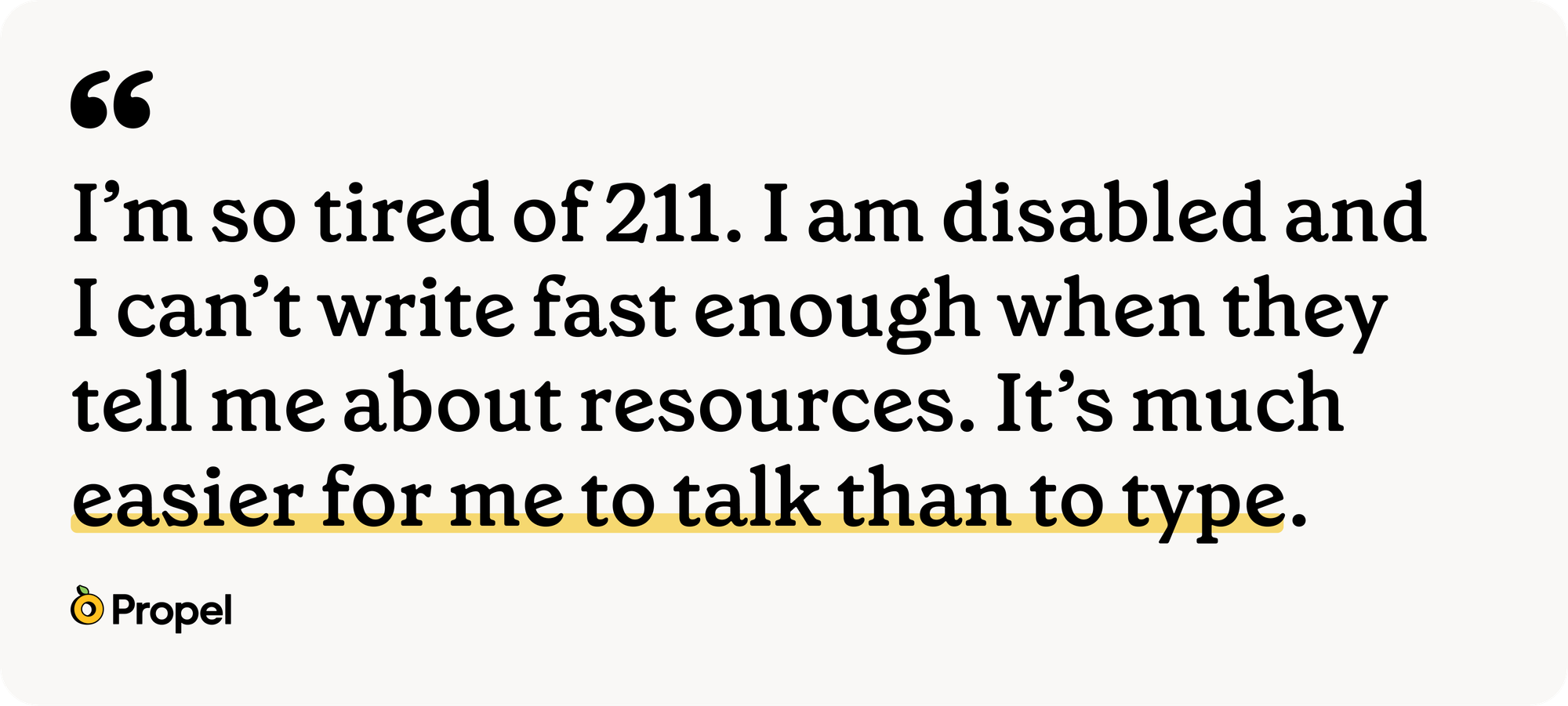

Our booth focused on how Propel users engage with common AI chat interfaces: do they use them, how do they use them today, have they ever used one to help navigate the SNAP program, and what fears or hesitations do they have? We went in wanting to understand needs, barriers to entry, and current behavior. We came back with a long list of insights, but the most consistent finding had nothing to do with prompts, accuracy, hallucinations, or even trust. It was about modality.

Our first observation was that people didn’t know where to start: “I don’t know what to ask.” Many participants who had been staring blankly at the text field (visibly composing, deleting, or asking their child for help) readily picked up the microphone and leaned into the experience. The voice channel was where the actual conversation happened.

Across the board, users reported wanting to avoid reading long, overwhelming responses. They didn't want to type the questions or had trouble typing on a computer. The moment voice mode was available, the friction collapsed.

This aligns closely with what human-computer interaction research (listed below) has found for the better part of two decades. Now the stakes are higher than they have ever been, because conversational AI is rapidly becoming the layer through which people access information, services, and benefits.

How much a system asks of you before it gives anything back matters. The time, the tech comfort, and the fluency are all part of what it takes to get through the process and come away with something useful. Most AI evaluation right now focuses on what comes out of LLMs: are they accurate, how often, do they reason well? The aha moment from these interviews was that the entry path matters too. And that barrier is higher for people in crisis, the people who'd benefit most from using AI to navigate public systems.

The reality of literacy in the United States

The 2023 PIAAC results (the OECD's flagship Survey of Adult Skills) found that 28 percent of U.S. adults score at literacy Level 1 or below, meaning they can read short, simple paragraphs but struggle with multi-step instructions, comparisons, or low-level inferences (NCES / OECD, 2024). The U.S. average literacy score has declined 12 points since 2017, the first statistically significant drop since the survey began (USAFacts, 2025). Two-thirds of adults with low literacy are U.S.-born. They are not, by and large, in the data that product teams use to make modality decisions.

For populations served by means-tested federal programs (SNAP, Medicaid, TANF, WIC), the prevalence of these barriers is higher. A study of SNAP-eligible adults found that only 37 percent had adequate health literacy to engage with standard nutrition program materials (Rivera et al., 2019, Nutrition Reviews). Low health literacy is a well-documented predictor of worse outcomes across nearly every domain of life that the government touches: chronic disease management, medication adherence, hospitalization rates, and use of preventive care (Chesser et al., 2021, Health Literacy).

When a product designed to help people navigate a complex public program presupposes the ability to read and write fluent English on a small screen, it has silently designed the most vulnerable users out of the product.

Device assumptions

The second assumption worth interrogating is the device. Pew's most recent national survey (January 2026) found that roughly one-third of adults in households earning under $30,000 a year are "smartphone-dependent," meaning they own a smartphone but do not subscribe to home broadband (Pew Research Center, 2026). For SNAP recipients, who by program eligibility must generally fall under 130 percent of the federal poverty line, that share is likely higher still. The interface for AI is a phone, often one with a cracked screen, with limited data, shared with family, used while doing something else.

Typing on a touchscreen is not a neutral act. Stanford and Baidu researchers established a decade ago that speech input is about three times faster than typing on a smartphone keyboard in English, with a 20.4 percent lower error rate during entry (Ruan et al., 2016). That was 2016, on a deep learning system several generations behind what's deployed today. The gap has not narrowed since; if anything, it has widened.

For a user who is not a fluent typist, on a small screen, in a noisy environment, with two minutes between obligations, the choice between text and voice isn't really a choice. Better AI outputs rely on more finely written prompts, which require more typing. The alternative is to accept worse answers, which quietly confirms to users their suspicion that AI isn’t for them. Either path leads to the same user outcome of disengagement. Voice is the only modality that resolves this conflict.

Two decades of research to build upon

Voice as the preferred modality for low-literacy users, low-vision users, users with dexterity limitations, and older adults is among the most replicated findings in HCI.

Microsoft Research's synthesis on UI design for low-literate users observed that low-literate populations "avoid complex functions, and primarily use mobile phones for voice communication only" (Medhi et al., 2011, Microsoft Research). Sherwani and colleagues at Carnegie Mellon's HealthLine project demonstrated that speech-based access to health information worked for low-literate users in contexts where text-based alternatives didn't (Sherwani et al., 2007). Agarwal and colleagues at IBM India built explicit voice-plus-graphical interfaces "to break the barrier of illiteracy" (Agarwal et al., 2013, INTERACT).

The same pattern holds among older adults. Chung et al. (2024) synthesized findings from two studies of low-income, primarily African American older adults using smart speakers and reported broadly positive experiences with hands-free interaction, with the important caveat that voice interfaces need to be customizable for the speech variations introduced by chronic conditions, dental health issues, and post-stroke articulation (Chung et al., 2024, Innovation in Aging). The Talk2Care work on LLM-based voice assistants for older adults found that voice meaningfully reduced the cognitive load of communicating with healthcare providers (Yang et al., 2024). A 2025 study in Frontiers in Computer Science found that voice input reduced initial learning costs for novice older adult users specifically because it bypasses the need to acquire a touchscreen mental model (Wang et al., 2025).

For users with dysarthric speech, limited mobility, low vision, or low literacy, voice technologies have been characterized as one of the highest-leverage accessibility interventions of the digital age (Voiceitt / Karten Network, 2024, Assistive Technology; Delić et al., 2014; Fager & Burnfield, 2015).

Voice mode should not be an experimental feature. Conversational AI is collapsing once-distinct system access patterns into a single substrate. If you can talk to an AI, you can navigate a benefits program. The same channel that lets someone navigate SNAP also handles prescription refills, school questions, billing disputes and EITC eligibility checks.

Natural language

Spoken language is closer to how people actually think. Users will speak in full thoughts when they will not type them. The natural language understanding underlying contemporary LLMs handles spoken inflection, hesitation, vernacular, and code-switching far more gracefully than a text input field's blinking cursor suggests.

The user who types "i need food help" gets a generic response, gives up, and concludes that AI "isn't for them" at exactly the moment in history when AI is becoming the cheapest and most patient interlocutor a person could possibly have.

We saw a particularly stark example of this in our observations. A user looking for resources for their service animal typed in a question phrased as a search term: “food for pets.” The AI returned generic information about low-income services for animals, without any acknowledgement that service animal support might have special grant programs. The right answer required them to use a precise legal term that they were trying to discover in the first place. The fact that the prompt required familiarity with the answer is invisible to AI models and policymakers, but for the populations we should design for, it is central to their frustration. AI systems can do the work of disambiguating, clarifying, and routing, but only if the user has the ability to prompt the right conversation.

Ideas for building AI products that work for SNAP beneficiaries

Voice mode cannot be an afterthought - it must be available by default, or at minimum, equal in prominence to text, across any AI chat interface aspiring to a non-trivial user base. Coming out of our user research day, these six strategies could significantly improve access for the safety net beneficiary population:

- Balance voice access visually. The microphone affordance should be the same size and visual weight as the text input field, not a small icon tucked next to it.

- Enable voice by default. Voice should be easily reachable, and the microphone permission flow needs to be thoughtfully designed: ask in context rather than on initial load, give a heads-up before the system prompt, and offer helpful fallbacks if a user doesn’t grant system permission.

- Pair voice output with voice input. Voice output, or text-to-speech response, should be paired with voice input by default on mobile, because reading the response is not free either.

- Keep voice mode out of the paywall. Voice mode should not be quietly downgraded behind a paywall or a "premium" tier; for populations that depend on AI for high-stakes navigation of public benefits, healthcare, or education, putting the most accessible modality behind a subscription is a regressive product strategy.

- Consider limited data plans in designs for low-income populations. Process data on device, or offer Wi-Fi gating as an option, to prevent large data downloads that users aren’t anticipating.

- Evaluate speech models with real user data. Speech models should be evaluated on the speech of the actual user base, not the speech of the engineering team. Customization for accent, dialect, post-stroke articulation, dental variation, and hesitation patterns is a first-class accessibility requirement, not an edge case (Chung et al., 2024; Voiceitt / Karten Network, 2024).
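
One way to make the last recommendation concrete is to report speech recognition error rates separately for each speaker group instead of as a single average. The sketch below computes word error rate (WER, a standard speech recognition metric) per group. It is an illustration rather than a description of any team's actual evaluation pipeline: the group labels, the sample transcripts, and the function names are all hypothetical, and a real evaluation would use consented recordings from actual users.

```python
from collections import defaultdict


def word_edit_distance(ref_words, hyp_words):
    """Minimum number of word substitutions, insertions, and deletions
    needed to turn the reference transcript into the hypothesis."""
    prev = list(range(len(hyp_words) + 1))
    for i, ref_word in enumerate(ref_words, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp_words, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # match or substitution
        prev = curr
    return prev[-1]


def wer_by_group(samples):
    """Word error rate per speaker group.

    `samples` is an iterable of (group, reference, hypothesis) tuples, where
    `reference` is what the person said and `hypothesis` is what the speech
    model transcribed. Errors and word counts are pooled within each group.
    """
    errors = defaultdict(int)
    words = defaultdict(int)
    for group, reference, hypothesis in samples:
        ref_words = reference.lower().split()
        hyp_words = hypothesis.lower().split()
        errors[group] += word_edit_distance(ref_words, hyp_words)
        words[group] += len(ref_words)
    return {group: errors[group] / words[group]
            for group in words if words[group] > 0}


# Hypothetical sample data, invented for illustration only.
samples = [
    ("engineering_team", "i need help with my snap benefits",
     "i need help with my snap benefits"),
    ("field_recordings", "i need help with my snap benefits",
     "i need help with my nap benefits"),
    ("field_recordings", "when does my ebt card reload",
     "when does my e b t card load"),
]

rates = wer_by_group(samples)
for group, rate in sorted(rates.items()):
    print(f"{group}: WER = {rate:.2f}")
```

A model that looks fine on average can still fail a specific group badly, so a per-group breakdown like this is what surfaces the gap between the engineering team's speech and the user base's speech.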

What we heard in San Diego is what the literature has been saying for two decades: the people most likely to benefit from a knowledgeable, patient, available-on-demand digital assistant are also the people least likely to have the time, dexterity, literacy, or broadband to type their way to one. Voice doesn't solve every problem in that gap. It removes one of the largest and most stubborn ones.

If you’d like to explore the design and testing of voice in AI-driven benefits navigation, please reach out!

References

Agarwal, S. K., Grover, J., Kumar, A., Puri, M., & Singh, M. (2013). Visual conversational interfaces to empower low-literacy users. In Human-Computer Interaction – INTERACT 2013. Springer. https://link.springer.com/chapter/10.1007/978-3-642-40498-6_67

Chesser, A. K., Keene Woods, N., Smothers, K., & Rogers, N. (2021). Health literacy barriers in the health care system: Barriers and opportunities for the profession. Social Work in Public Health. https://pmc.ncbi.nlm.nih.gov/articles/PMC8453407/

Chung, J., Mansion, N., Viceconte, M., Syros, R., & Winship, J. (2024). User challenges and preferences for voice interface design on smart speakers among low-income minority older adults. Innovation in Aging, 8(Suppl 1), 581–582. https://pmc.ncbi.nlm.nih.gov/articles/PMC11691214/

Delić, V., Sečujski, M., Jakovljević, N., Pekar, D., Mišković, D., Popović, B., Ostrogonac, S., Bojanić, M., & Knežević, D. (2014). Speech and language resources for low-literate users. IEEE 9th Symposium on Applied Computational Intelligence and Informatics (SACI).

Fager, S. K., & Burnfield, J. M. (2015). Patients' experiences with technology during inpatient rehabilitation: Opportunities to support independence and therapeutic engagement. Disability and Rehabilitation: Assistive Technology.

Knoth, N., Tolzin, A., Janson, A., & Leimeister, J. M. (2024). AI literacy and its implications for prompt engineering strategies. Computers and Education: Artificial Intelligence, 6. https://www.sciencedirect.com/science/article/pii/S2666920X24000262

Lee, V. R., Pope, D., Miles, S., & Zárate, R. C. (2023). Prompt literacy: A key for AI-based learning. Educational Leadership / ASCD. https://www.ascd.org/el/articles/prompt-literacy-a-key-for-ai-based-learning

Medhi, I., Patnaik, S., Brunskill, E., Gautama, S. N., Thies, W., & Toyama, K. (2011). Designing mobile interfaces for novice and low-literacy users. ACM Transactions on Computer-Human Interaction. Microsoft Research. https://www.microsoft.com/en-us/research/publication/user-interface-design-for-low-literate-and-novice-users-past-present-and-future/

National Center for Education Statistics / OECD. (2024). Program for the International Assessment of Adult Competencies (PIAAC), Cycle 2, 2022–2023: U.S. Results. https://nces.ed.gov/whatsnew/press_releases/12_10_2024.asp

Pew Research Center. (2026). Internet use, smartphone ownership, and digital divides in the U.S. https://www.pewresearch.org/short-reads/2026/01/08/internet-use-smartphone-ownership-digital-divides-in-u-s/

Rivera, R. L., Maulding, M. K., & Eicher-Miller, H. A. (2019). Effect of Supplemental Nutrition Assistance Program–Education (SNAP-Ed) on food security and dietary outcomes. Nutrition Reviews, 77(12), 903–921. https://academic.oup.com/nutritionreviews/article/77/12/903/5488130

Ruan, S., Wobbrock, J. O., Liou, K., Ng, A., & Landay, J. A. (2016). Speech is 3x faster than typing for English and Mandarin text entry on mobile devices. arXiv:1608.07323. https://hci.stanford.edu/research/speech/

Sherwani, J., Ali, N., Mirza, S., Fatma, A., Memon, Y., Karim, M., Tongia, R., & Rosenfeld, R. (2007). HealthLine: Speech-based access to health information by low-literate users. International Conference on Information and Communication Technologies and Development (ICTD).

Voiceitt / Karten Network. (2024). Developing accessible speech technology with users with dysarthric speech. Assistive Technology. https://www.tandfonline.com/doi/full/10.1080/10400435.2024.2328082

Walter, Y. (2024). Embracing the future of artificial intelligence in the classroom: The relevance of AI literacy, prompt engineering, and critical thinking in modern education. International Journal of Educational Technology in Higher Education, 21. https://link.springer.com/article/10.1186/s41239-024-00448-3

Wang, X., et al. (2025). The impact of usage experience and input modality on trust experience and cognitive load in older adults. Frontiers in Computer Science. https://www.frontiersin.org/journals/computer-science/articles/10.3389/fcomp.2025.1659594/full

Yang, Z., et al. (2024). Talk2Care: An LLM-based voice assistant for communication between healthcare providers and older adults. arXiv:2309.09919. https://arxiv.org/abs/2309.09919